As universities discuss “AI sovereignty,” much of the conversation understandably focuses on local inference: purchasing GPUs, building clusters, and running open-weight models on institutional infrastructure. That can be a sensible strategy for some workloads. Local capacity can improve resilience, support privacy-sensitive use cases, and reduce dependence on a single external provider.

But local inference is only one part of institutional autonomy, and there is lower-hanging fruit that should probably be picked first. A university remains strategically dependent if its chats, documents, histories, workflows, and integrations are tied to one application stack or one vendor ecosystem, regardless of where its models run. For that reason, we believe the more durable question is often not only “Where is the inference?” but also “Where is the data, and how easily can we move it?”

AI Sovereignty and Data Sovereignty Are Not the Same

Running inference locally can be valuable, but it is not necessarily the first step toward reducing vendor lock-in. Universities can often make considerable progress sooner by designing the less visible system layers well: middleware, orchestration, storage, APIs, and frontend integration. Those pieces may be less glamorous than a GPU cluster, but they often determine whether an institution can actually switch providers later without losing continuity.

Our view is that AI models are exchangeable. AI systems do not need to be. A university should be able to keep the same frontend applications, keep the same data storage, and use different model backends over time. Those backends may include commercial services, public-good providers, locally hosted open-weight models, or a mixture of all three.

- Keep the same frontend and user workflows

- Keep institutional data in portable storage

- Use different model backends behind the same system

- Mix open and closed ecosystems where each is most appropriate

- Focus on where the data lives, not only where inference runs
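To make "portable storage" concrete, here is a minimal sketch of what vendor-neutral chat records could look like. The `ChatMessage` fields and the JSON Lines layout are assumptions chosen for illustration, not a description of any particular product's schema. The point is only that records stored this way can be exported and re-imported without the application that created them.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class ChatMessage:
    """Hypothetical vendor-neutral record for one chat turn."""
    conversation_id: str
    role: str      # "user" or "assistant"
    content: str
    backend: str   # label of the model backend involved in this turn


def export_jsonl(messages: list[ChatMessage]) -> str:
    """Serialize messages as JSON Lines: one self-describing record per line."""
    # json.dumps escapes embedded newlines, so each record stays on one line.
    return "\n".join(json.dumps(asdict(m)) for m in messages)


def import_jsonl(text: str) -> list[ChatMessage]:
    """Rebuild messages from a JSON Lines export."""
    return [
        ChatMessage(**json.loads(line))
        for line in text.split("\n")
        if line.strip()
    ]
```

Because the export is plain text with named fields, it can be read by other tools or loaded into a different system, which is what keeps the accumulated history movable.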

Why This Matters for Universities

From an administrative perspective, portability reduces procurement risk. It becomes easier to respond to budget changes, regulatory requirements, or geopolitical shifts when the institution is not forced to migrate its entire AI workflow every time the market changes.

From an engineering perspective, data sovereignty supports cleaner architecture. Applications can be designed so that user identity, document stores, chat history, audit trails, policy controls, and workflow logic remain in institutional or institution-chosen infrastructure, while model calls are treated as replaceable backend dependencies.

This is also why the system layer matters so much. Universities already have portals, LMS integrations, document repositories, and other operational systems that cannot simply be replaced overnight. A portable orchestration layer can let those current and even legacy applications continue to function while connecting them through APIs to a more flexible AI backend.
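The separation described above can be sketched in a few lines. This is an illustrative outline under stated assumptions, not Ethel's actual interface: `ModelBackend`, `EchoBackend`, and `ChatService` are hypothetical names, and the stand-in backend simply echoes its input where a real one would call a model API. What the sketch shows is that history lives in the service, on the institution's side, while the backend is a replaceable dependency.

```python
from dataclasses import dataclass, field
from typing import Protocol


class ModelBackend(Protocol):
    """Hypothetical interface that any model provider adapter would satisfy."""
    name: str

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoBackend:
    """Stand-in backend for this sketch; a real adapter would call a model API."""
    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


@dataclass
class ChatService:
    """System layer: owns the history, delegates generation to a backend."""
    backend: ModelBackend
    # Each entry is (backend name, prompt, answer), kept independently of any vendor.
    history: list[tuple[str, str, str]] = field(default_factory=list)

    def ask(self, prompt: str) -> str:
        answer = self.backend.complete(prompt)
        self.history.append((self.backend.name, prompt, answer))
        return answer
```

Swapping providers is then a single assignment, for example `service.backend = EchoBackend("local-llm")`, and the stored history carries over unchanged.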

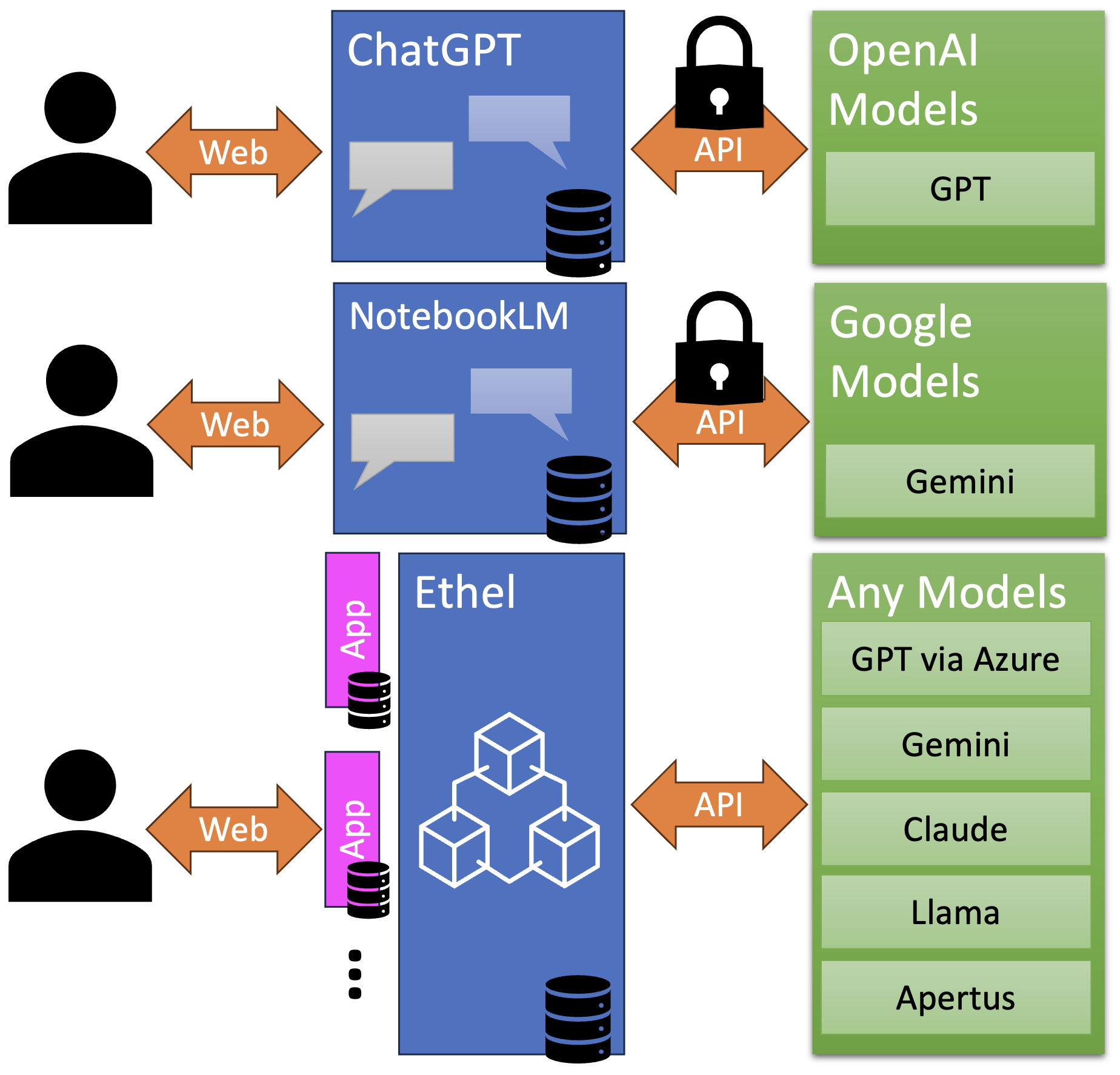

Vendor-tied systems deserve careful thought in this light. Products such as ChatGPT or NotebookLM can offer compelling experiences, but the accumulated value often lives inside the vendor’s application boundary: chats, uploaded documents, history, and interaction context. That is not a criticism of those products; it is a reminder that convenience today can become switching cost tomorrow.

A Hybrid Model May Be More Attainable

For many universities, a hybrid strategy may be more attainable and more sustainable than an all-or-nothing approach. Not every workload needs to run on local GPUs, and not every workload should be sent to a single public cloud vendor. Different tasks have different requirements for privacy, cost, latency, throughput, modality, quality, and feature support.

A hybrid system lets institutions choose pragmatically. They can use commercial models where those models are strongest, open-weight models where local control or predictable cost is more important, and switch between them as technology evolves. That also leaves room to use affordances that commercial systems may currently provide, without binding the institution’s entire architecture to a single model family or vendor roadmap.

- Use commercial models when they offer clear quality or feature advantages

- Use open-weight models where local control or policy requirements make sense

- Route different tasks to different backends without changing the user experience

- Preserve the option to switch providers as models, prices, and constraints change
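As a rough illustration of routing tasks to different backends, the sketch below maps task labels to backend callables and falls back to a default. The names `TaskRouter` and `make_stub`, and the task labels in the usage note, are hypothetical. A production system would route on richer policy (data classification, cost, latency) rather than a plain string.

```python
from typing import Callable

# For this sketch a backend is just a function from prompt to answer.
Backend = Callable[[str], str]


def make_stub(name: str) -> Backend:
    """Create a placeholder backend that tags its output with its name."""
    return lambda prompt: f"{name}: {prompt}"


class TaskRouter:
    """Send each task type to a configured backend, with a default fallback."""

    def __init__(self, default: Backend) -> None:
        self._default = default
        self._routes: dict[str, Backend] = {}

    def register(self, task: str, backend: Backend) -> None:
        """Assign (or reassign) the backend used for a task type."""
        self._routes[task] = backend

    def run(self, task: str, prompt: str) -> str:
        """Run the prompt on the backend registered for the task, else the default."""
        return self._routes.get(task, self._default)(prompt)
```

A caller might register a locally hosted stub for a privacy-sensitive label such as `"student-records"` and leave everything else on the default. Re-registering a task later changes where it runs without changing how callers invoke `run`.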

How Ethel Approaches the Problem

Ethel is open-source and designed around this separation of concerns. It can run on-premise or in the cloud, whether as a university-operated installation or hosted by a public-good or commercial provider. The goal is not to bind institutions to one model vendor, but to give them a stable system layer in which data, workflows, and applications remain under institutional control.

In practical terms, that means Ethel can keep the same frontend applications and the same stored institutional data while using different backends behind the scenes. More than one model can be used within the same system. Models can be replaced over time with minimal disruption to users. Existing frontend applications, including legacy ones, can continue to interact through APIs rather than being discarded whenever the model landscape changes.

Ethel itself only needs CPUs on a standard Kubernetes setup. GPU-heavy inference does not need to be built into the institutional system layer. When local inference makes sense, Ethel can connect to it. When external models are the better fit, Ethel can connect to those as well. That flexibility is the point.

Looking Ahead

We do not see data sovereignty and AI sovereignty as opposing ideas. Local inference can be an important part of a university’s strategy, and institutions that invest in it may do so for good reasons. Our argument is simply that durable autonomy also depends on controlling the data layer and on building a system architecture that can absorb change.

If models are going to change, then universities should build systems that are ready for change. Keep the user experience stable. Keep the data portable. Keep the backend flexible. That, in our view, is a practical path toward a more resilient and sustainable AI ecosystem for higher education.